Because the conversations around GenAI tend to be very binary, some might believe my posts imply that I am against AI. This is very much not the case. I do believe it is a useful tool. 80% accuracy is not high enough for all purposes, but humans often don’t agree with their own output that frequently. Chat GPT can write an outline much much faster than I can. It can skim an article much faster than I can. It can write multiple choice questions and reponse options, edit for spelling and grammatical errors, double check me for correctness, proofread, and plan. AI is able to cut hours off of many tasks. AI is also capable of scoring short essays as consistently as human raters.

So what is my personal integration plan for AI automation to leverage its strengths and weaknesses? I ask myself the following questions when using AI.

Will there be a human review of the output before reaching the end-user?

The first question you ask yourself should be about how you are using the output. Is this a direct-to-consumer pipeline or part of a drafting and revision process documented and overseen by experts?

If this is part of a drafting and revision process with expert reviewers, you’re good to go. Use this as a drafting tool, and be sure it does not make major changes to the text when asked to check for gramatical errors.

If the output will be seen directly by consumers with no review, you need to think about who will be using the output and how they are going to implement the tool. If the end user cannot claim the responsibility for the burden of truth, you should not skip the human in the loop.

Does the training data reflect your personal views and those of your target audience?

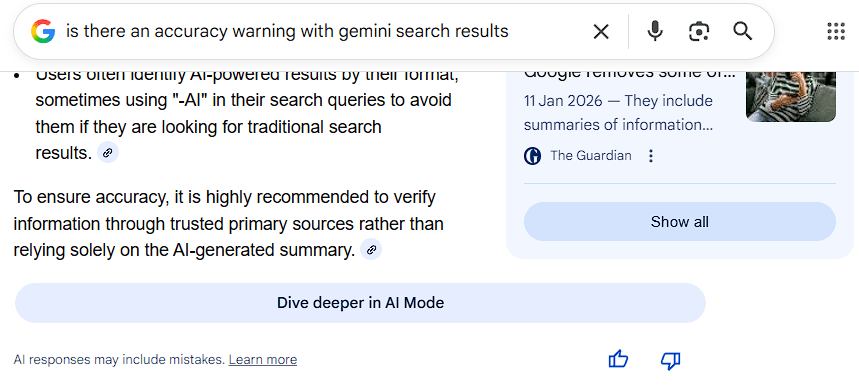

If you and your clients love large corporations, Peter Thiel is your personal hero, and party with Elon Musk on the weekends, you are probably doing ok. If not, you should think about who this product is intended to serve. It has been reported on several occassions that Musk retrained Grok after the ai went too “woke” for his conservative fan base.

Direct to consumer pipelines may not be the best idea if you are not a member of the technocracy. While Google has apologized for this event. Some news sources claimed this is due to human error and not AI, while others attributed the notice to an AI bot. Either way, your AI needs the same level of oversight as your interns. F*** ups this epic will cost you clients unless you have a monopoly or oligopoly.

How important is the factual accuracy of the output?

If the stakes are higher, the percent accuracy needs to be higher. If the tool is directly related to high stakes exam preparation, physical health, mental health, investment, spending, or intended to facilitate any other major decisions, you need to consider whether 85% maximum accuracy is high enough.

General Low-Stakes output

For newsletter type announcements, personalized event invitations from a template, or rejection emails, you are probably good to go. These are low stakes activities that are not likely to impact major decisions made by the end-user, and may help to boost engagement.

Investments & Spending (High Stakes)

Here we have to recall the importance of fiduciary responsibility in model construction. The product (LLM output) must financially benefit the investors. It is highly likely the LLM will encourage investment in companies owned by their investors or those who are paying for promotion.

Corporate Decisions (High Stakes)

Decisions such as hiring and spending should be reviewed by humans. As a psychometrician I can say that LLMs have little understanding of learning, application of knowledge, or the details of many more technical roles.

I ran a test across multiple trials asking an AI agent to rank resumes. When dumped together in the input, the AI did not rank the candidates by skills, but rather by the order given. In the majority of trials, resume 1 was the top candidate and resume 11 was the 11th candidate. There are of course different analyses that can be performed, but a hard coded search for key-terms could provide a count of the number of desired skills a candidate included on their resume with significantly higher consistency.

Also, I have done several AI interviews for work as a math expert or statistical analyst, and the interviews are typically poorly handled. If I state the correct response using terminology different from the expected input, the interview continues with the AI attempting to explain the concepts to me while I become increasingly amused… or confused. This includes mathematically equivalent expressions, and terminology that is equivalent by definition. More than once I believed that I stated the information incorrectly after becoming trapped in a loop because I learned the same constructs under different names. As it turns out, my training in education research has simply provided me a different vocabulary and the AI agent being emplloyed is not significantly well versed on the topic to work in another vocabulary framework.

Learning materials (Low or High Stakes)

Lower grade levels tend to have more representative training data and produce better results. For a specific classroom where a teacher will oversee learning, determine if the output is appropriate for their students, and ensure the curriculum content and coverage, this is probably fine.

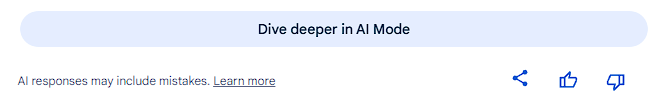

It may also be okay to use generative AI without review if the content is a learning supplement where the students will have knowledge from the textbook or teacher and the supplement is delivered with notice that the materials may show inaccuracy. Regarding generative AI integration in higher education, Kortemeyer et al. (2024) reports students find the Ethel Chatbot as being helpful despite sometimes providing incorrect responses.

On the otherhand, if you are generating the students sole learning materials you should think twice before taking the human out of the loop. While RAG models can significantly improve the output, there are no guarantees. In my own experience, LLMs struggle with the connections between topics, understanding causal relationships, and creating explanations in general. Additionally, the LLM’s training varies significantly across topics within the same course or subject.

Assessment Materials (Low or High Stakes)

Psychometrics is an entire field of science related to assessment and survey development. It is not flashy like physics, so we don’t get specials on the Discovery Channel. In fact, I have not met a single person that knows what I do that is not also a psychometrician. What do we do? We measure qualities that cannot be directly observed like math ability, psychological traits, or learning aptitude. We research other instruments, build new ones, make sure they measure what they should, equate them for comparison, refine them to get as much information as possible out of the smalest number of questions. We also measure and compare performance on those tests.

And from a psychometrician’s perspective, you shouldn’t use AI to write tests. AI generated assessments tend to focus on rote memorization rather than applied skills, and they break quite a few rules of assessment design. I will leave you with an example, but I will save the in depth analysis for another day. It takes multiple API calls to get one useful question, and they still need a bit of human review.

When automating the generation of testing materials, LLMs frequently wrote questions which asked “why” or “what” but the provided response was a restatement of the question, saying that it happened without additional detail.

Here is a question generated by ChatGPT:

What makes hydrogen bonds stronger than typical dipole-dipole interactions?

A. Larger atomic size

B. Greater electron shielding

C. Strong attraction between δ⁺ hydrogen and lone pairs

D. Presence of ionic charges

It seems okay at a glance, but this would not make it onto an exam in my own classroom. While C is the “correct” response, it is not a strong answer to the question asked. It fails psychometrically for several reasons. Primarily, rather than explain what makes hydrogen bonds stronger, it states that the elements of a hydrogen bond are strong. A more correct response might include distance between charges, localized charge, or directional alignment rather than a restatement of the question. Other frequent generative AI issues appear here where the correct response is double the length of the other options, the correct response uses more advanced notation or vocabulary, and it is the only response option that includes a leading word from the question.

The questions and materials generated by the LLMs also struggle to apply learning in new contexts. While a RAG model can direct more accurate content, the LLM probaballistically determines the next words. If your input is regarding any specific examples, the questions for that topic will be generated from those specific examples rather than building new questions with new contexts, creating exams based on rote memorization rather than the application of learning into new contexts which is the more highly preferred method for testing students’ knowledge.

If you are preparing course materials for upper grades, tests that will include applied knowledge, or primary materials for first time learners, you should include human review or your students will most likely arrive underprepared for the exam.

News Reviews

The British Broadcasting Corporation (BBC) and the European Broadcasting Union (EBU) conducted a study in 2025. According to the BBC, they found that AI summaries had “errors “significant issues” in 45% of the summaries and 20% contained “major accuracy issues.” Those faulty summaries cost at least one European journalist his credibility. According to a recent article from the Guardian reveals Peter Vandermeersch was suspended for misquoting or misattributing quotes to individuals after not checking the AI tools he was using for news summaries.

My choice?

Treat AI like the emerging science it is. When Radium was first introduced, it was branded as a cure-all by snake oil salesmen. People added radium to their drinking water, chocolate, bread… That caused a lot of problems. But after a lot of careful science, its medical purposes were uncovered.

Treat your AI like it’s the CEO’s 16yo nephew there on a summer internship. Let him do the simple repetitive tasks, write a rough draft, check for grammatical errors. And leave the thinking to the grown-ups.